We know that manual investigations don’t scale. Alert volume keeps growing, and analysts can’t keep up, which is why autonomous investigation is becoming essential in the SOC.

Teams turn to AI tools to close that gap. But an AI tool that tells you an alert is malicious without showing you what it checked, why it asked each question, or how it reached its conclusion doesn’t reduce analyst workload. It just relocates it.

Autonomous investigation only reduces workload when every step is visible.

Vega is built transparently: every query, every piece of evidence, and every decision is fully visible. The result: less noise, clearer context, and faster, more focused response.

Let’s look at an example.

End-to-end example: investigating an SMS MFA bypass

The investigation begins with a single alert:

Authentication Success After Multiple Failed MFA Attempts, targeting user ID 00u2kd9f7xANGELDOYLE

On its own, this signal indicates suspicious authentication behavior, but it does not provide enough context to determine whether this is benign activity or a potential account compromise.

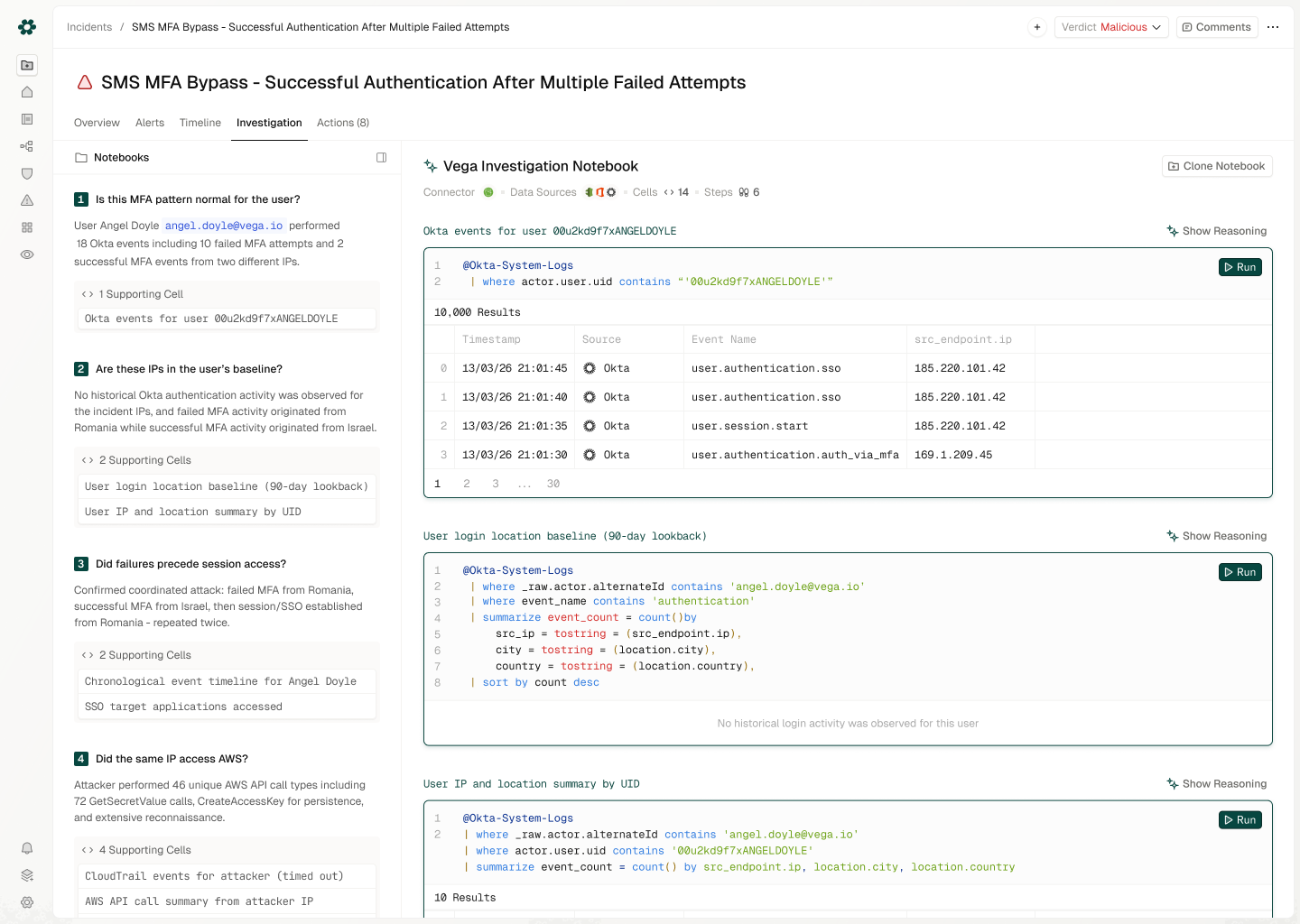

Vega starts by asking a simple question: is this behavior normal for this user?

To answer that, it translates the question into queries over Okta logs, first identifying the user behind the ID and then analyzing their historical login patterns and location baseline.

@Okta-System-Logs
| where actor.user.uid contains '00u2kd9f7xANGELDOYLE'

Followed by:

@Okta-System-Logs
| where _raw.actor.alternateId contains '[email protected]'
| where event_name contains 'authentication'
| summarize event_count = count() by
  src_ip = tostring(src_endpoint.ip),
  city = tostring(location.city),
  country = tostring(location.country)
| sort by event_count desc

The results reveal 10 failed MFA attempts from Romania, followed by 2 successful MFA attempts from Israel, with no prior history from either location.

Vega interprets this as anomalous behavior and raises the hypothesis of a coordinated MFA bypass.
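The baseline comparison behind that conclusion can be illustrated with a minimal Python sketch. It is not Vega’s implementation: the function name, the row shape, and the baseline country are assumptions made for illustration, and only the Romania and Israel counts come from the example above.

```python
from collections import Counter

def new_locations(baseline_events, recent_events):
    """Return (country, count) pairs seen recently but never in the baseline."""
    known = {e["country"] for e in baseline_events}
    recent = Counter(e["country"] for e in recent_events)
    return sorted((c, n) for c, n in recent.items() if c not in known)

# Hypothetical history: the baseline country is invented for illustration.
baseline = [{"country": "United States"}] * 40
# Counts taken from the example: 10 events from Romania, 2 from Israel.
recent = [{"country": "Romania"}] * 10 + [{"country": "Israel"}] * 2

print(new_locations(baseline, recent))
```

Any country that appears in the recent window but not in the user’s history is returned with its event count, which is the signal that prompts the next question.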

From there, the investigation continues: how did access actually occur?

Vega analyzes the authentication timeline and SSO activity, uncovering a repeated pattern: failed MFA attempts followed by successful session creation and SSO access. This confirms that the attacker was able to establish a session and move laterally into cloud applications.
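One way to picture the timeline check is a small Python sketch that flags a success preceded by a run of failures. The threshold, the time window, the outcome labels, and the timestamps are all assumptions for illustration, not values taken from Vega or from Okta’s schema.

```python
from datetime import datetime, timedelta

def failures_before_success(events, min_failures=3, window=timedelta(minutes=30)):
    """Return (success_time, failure_count) for each success that follows
    at least min_failures failed attempts within the given window."""
    hits, failures = [], []
    for ts, outcome in sorted(events):
        if outcome == "FAILURE":
            failures.append(ts)
        elif outcome == "SUCCESS":
            recent = [f for f in failures if ts - f <= window]
            if len(recent) >= min_failures:
                hits.append((ts, len(recent)))
            failures = []  # a success resets the failure streak
    return hits

# Hypothetical timestamps: ten failures one minute apart, then a success.
start = datetime(2024, 1, 1, 9, 0)
events = [(start + timedelta(minutes=i), "FAILURE") for i in range(10)]
events.append((start + timedelta(minutes=11), "SUCCESS"))

hits = failures_before_success(events)
print(hits)
```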

With initial access confirmed, Vega expands the scope: what happened after login?

It queries cloud activity across AWS CloudTrail, focusing on the user and the suspicious source IP:

@CloudTrail
| where _raw.userIdentity.arn contains 'angel.doyle'
  or src_endpoint.ip == '185.220.101.42'

The results show secret access, API reconnaissance, and the creation of an IAM access key. At this stage, Vega concludes this is no longer limited to identity misuse. It is a cloud compromise with persistence established.
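As a rough illustration of how CloudTrail results like these could be grouped, the Python sketch below maps a few well-known AWS API event names to the three behaviors described. The mapping and the sample records are assumptions for illustration; the source does not say which specific API calls appeared in this investigation or how Vega classifies them.

```python
# Assumed mapping from CloudTrail eventName values to behaviors.
CATEGORIES = {
    "GetSecretValue": "secret_access",
    "ListUsers": "reconnaissance",
    "ListRoles": "reconnaissance",
    "GetCallerIdentity": "reconnaissance",
    "CreateAccessKey": "persistence",
}

def categorize(records, source_ip):
    """Group event names from one source IP by assumed behavior category."""
    summary = {}
    for r in records:
        if r["sourceIPAddress"] != source_ip:
            continue
        category = CATEGORIES.get(r["eventName"])
        if category:
            summary.setdefault(category, []).append(r["eventName"])
    return summary

# Hypothetical records using standard CloudTrail field names.
suspicious_ip = "185.220.101.42"
records = [
    {"eventName": "ConsoleLogin", "sourceIPAddress": suspicious_ip},
    {"eventName": "GetSecretValue", "sourceIPAddress": suspicious_ip},
    {"eventName": "ListUsers", "sourceIPAddress": suspicious_ip},
    {"eventName": "ListRoles", "sourceIPAddress": suspicious_ip},
    {"eventName": "CreateAccessKey", "sourceIPAddress": suspicious_ip},
    {"eventName": "CreateAccessKey", "sourceIPAddress": "10.0.0.5"},
]

summary = categorize(records, suspicious_ip)
print(summary)
```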

Finally, Vega asks: what data was accessed, and what is the impact?

It investigates Microsoft 365 and Entra activity, identifying:

- Creation of an external email forwarding rule

- Access to 114 sensitive files including VPN credentials and database connection strings

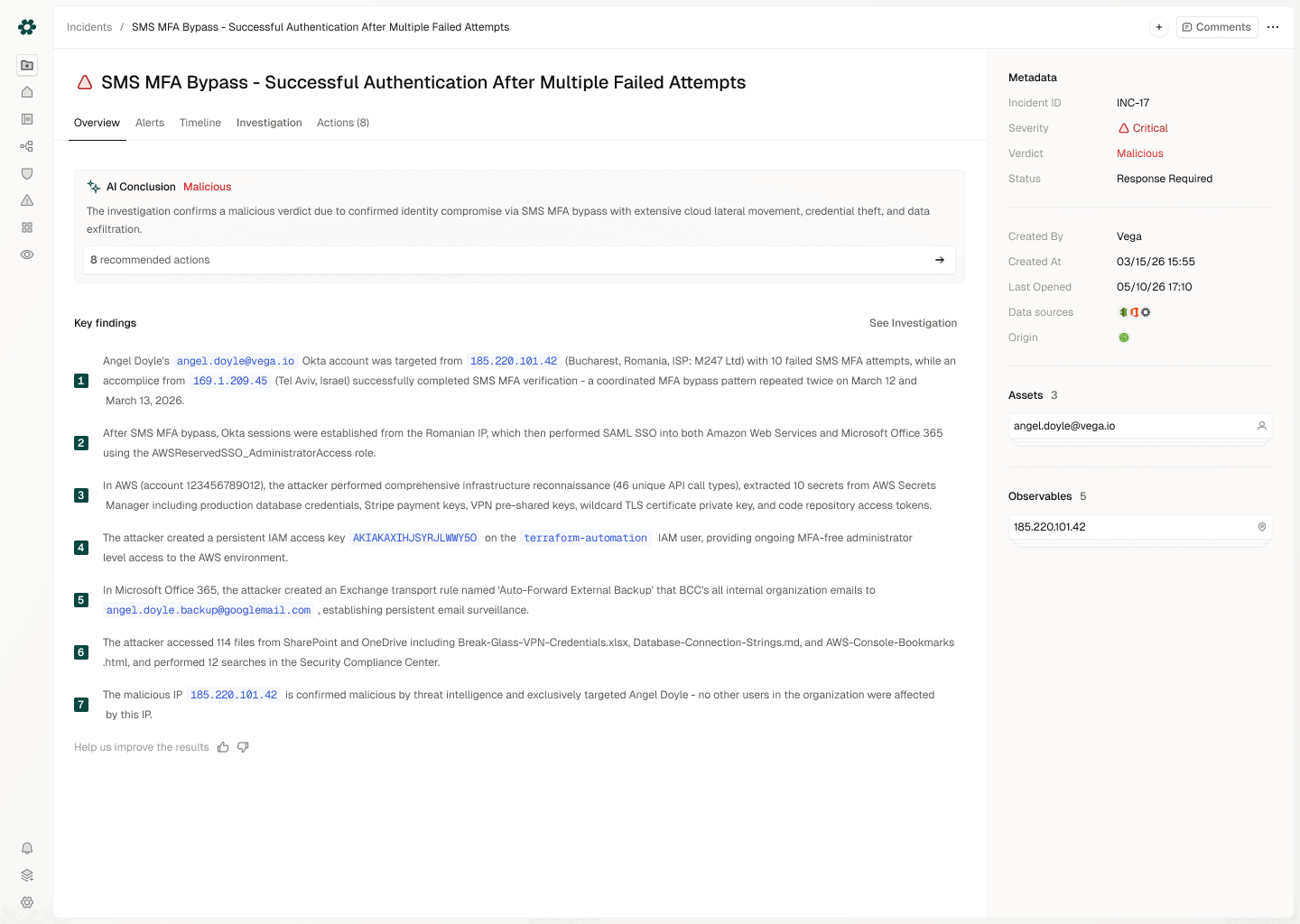

Final verdict: An attacker bypassed MFA to compromise the account, established persistent access, moved across cloud services, and exfiltrated sensitive data.

By correlating identity, cloud, and SaaS activity, Vega builds a complete attack narrative.

What analysts actually see

Overview: the full incident picture

Instead of reviewing isolated alerts, analysts start from an Overview tab where related signals are already stitched together into a single, coherent incident.

The Overview gives analysts the full picture upfront:

- Final verdict: the investigation outcome and confidence level

- Key findings: the most important evidence and conclusions

- Recommended actions: clear next steps for response

- Incident context: affected assets, observables, properties, and related alerts

- Timeline: a unified sequence of activity including original alerts and evidence collected during the investigation

This gives analysts a clear starting point before they decide whether to review the deeper investigation path.
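A unified timeline of this kind can be sketched in Python as a merge of per-source event lists. The event shape, timestamps, and ordering below are hypothetical and only illustrate the idea; they are not taken from the investigation above or from Vega’s data model.

```python
import heapq

def unified_timeline(*sources):
    """Merge per-source event lists (each already sorted by time) into one sequence."""
    return list(heapq.merge(*sources, key=lambda e: e["time"]))

# Hypothetical events; ISO 8601 strings in one format sort chronologically.
okta = [
    {"time": "2024-01-01T09:00:00Z", "source": "Okta", "event": "MFA failure"},
    {"time": "2024-01-01T09:11:00Z", "source": "Okta", "event": "MFA success"},
]
cloudtrail = [
    {"time": "2024-01-01T09:20:00Z", "source": "CloudTrail", "event": "CreateAccessKey"},
]
m365 = [
    {"time": "2024-01-01T09:15:00Z", "source": "Microsoft 365", "event": "Forwarding rule created"},
]

timeline = unified_timeline(okta, cloudtrail, m365)
for e in timeline:
    print(e["time"], e["source"], e["event"])
```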

Step-by-step investigation

Every investigation includes a full breakdown of:

- Questions asked

- Queries executed

- Evidence collected

- Reasoning behind each step

How Vega AI investigation works

Vega operates as an iterative investigation agent: asking questions, retrieving data, analyzing results, and determining the next step.

Each investigation follows a structured loop:

- Ask: form hypotheses and define investigative questions

- Translate: convert questions into queries across relevant data sources

- Analyze: execute queries and process results

- Reason: interpret findings and assess impact

- Repeat: continue exploring based on new evidence

- Verdict: deliver a final, evidence-backed conclusion

This approach allows Vega to dynamically explore incidents, correlate signals across systems, and build a complete understanding before reaching a conclusion.
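The loop can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Vega’s implementation: `investigate`, `Step`, `Investigation`, and the three callables are hypothetical names, and the scripted `plan` stands in for real query execution and reasoning.

```python
from dataclasses import dataclass, field

@dataclass
class Step:
    question: str
    query: str
    evidence: list
    reasoning: str

@dataclass
class Investigation:
    steps: list = field(default_factory=list)
    verdict: str = ""

def investigate(first_question, translate, run_query, reason, max_steps=10):
    """Ask, translate, analyze, and reason until no follow-up question remains."""
    inv = Investigation()
    question = first_question
    while question and len(inv.steps) < max_steps:
        query = translate(question)                          # Translate
        evidence = run_query(query)                          # Analyze
        reasoning, next_question = reason(question, evidence)  # Reason
        inv.steps.append(Step(question, query, evidence, reasoning))
        question = next_question                             # Repeat
    # Verdict: the last reasoning if the loop finished, else inconclusive.
    inv.verdict = inv.steps[-1].reasoning if inv.steps and not question else "inconclusive"
    return inv

# Scripted stand-ins for the real components (hypothetical).
plan = {
    "Is this behavior normal for this user?":
        ("No prior logins from these locations", "How did access occur?"),
    "How did access occur?":
        ("Failed MFA followed by session creation", None),
}
inv = investigate(
    "Is this behavior normal for this user?",
    translate=lambda q: f"query for: {q}",
    run_query=lambda query: [],
    reason=lambda q, evidence: plan[q],
)
print(len(inv.steps), inv.verdict)
```

Recording every step as a `Step` is what makes the breakdown of questions, queries, evidence, and reasoning available for review afterward.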

A focused incident queue

Analysts don’t work through a noisy alert backlog. Vega surfaces a small, prioritized queue of incidents that require attention.

Lower-confidence or less relevant alerts are still triaged and remain fully transparent, but they don’t clutter the main workflow, so teams can focus on the alerts that deserve analyst time.
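A minimal Python sketch of such a split is shown below. The confidence field, the 0.7 threshold, and the incident records are assumptions for illustration; the source does not describe how Vega scores or ranks incidents.

```python
def split_queue(incidents, min_confidence=0.7):
    """Return (main_queue, triaged): high-confidence incidents sorted
    highest first, and the remainder kept available for review."""
    main = sorted(
        (i for i in incidents if i["confidence"] >= min_confidence),
        key=lambda i: i["confidence"],
        reverse=True,
    )
    triaged = [i for i in incidents if i["confidence"] < min_confidence]
    return main, triaged

# Hypothetical incidents.
incidents = [
    {"id": "INC-1", "confidence": 0.55},
    {"id": "INC-2", "confidence": 0.95},
    {"id": "INC-3", "confidence": 0.80},
]

main, triaged = split_queue(incidents)
print([i["id"] for i in main], [i["id"] for i in triaged])
```

Nothing is discarded in this sketch: the lower-confidence incidents are returned in a separate list rather than dropped.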

Why trust Vega AI investigation?

Trust starts with two basic things: visibility and context.

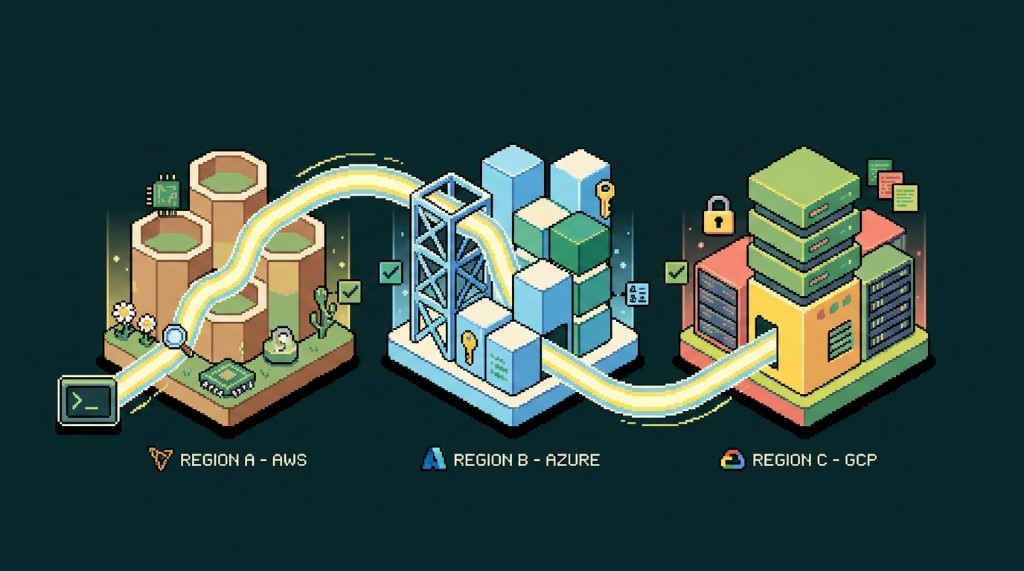

Vega learns your environment and understands where relevant data lives and what coverage exists across your stack. It doesn’t rely on a single source. Instead, it dynamically pulls from across your security ecosystem.

For example, Vega can use Axonius to understand device function, ownership, and sensitivity, or Okta to enrich identity context such as user title, department, and group membership. This allows the investigation to assess not just what happened, but also how critical it is.

Every investigation is fully transparent. Analysts can see the exact questions asked, the queries executed, the evidence collected, and the reasoning behind each step.

Trust is also reinforced through control. Analysts can provide feedback on every step and decision in the investigation, helping Vega continuously improve and better align with your organization’s environment.

The result is an investigation process that is explainable, context-aware, and continuously improving.

Closing thoughts: why transparency is the new baseline

Autonomous investigation isn’t just about faster answers. It’s about making those answers usable. If an analyst gets a verdict without being able to see the full decision chain and the raw telemetry behind it, they will spend time trying to reconstruct that evidence themselves.

That is time wasted.

Vega removes that step entirely by making every part of the investigation visible, explainable, and grounded in real data. With Vega’s federated analytics layer, this visibility comes without centralizing all the data. Instead, your data remains in its original systems while still being fully accessible during the investigation.

By combining transparency, full organizational context, and continuous feedback, Vega enables teams to move from investigation to decision with confidence, without the need to second-guess or redo the work.

If your AI investigation tools ask you to trust the verdict, ask for the receipts. Vega’s verdicts come with them by default. Schedule a demo to walk through one in your environment.